Attackers usually scan websites for information about the software stack behind them, for example:

- Web server

- Programming language

- Framework version

- Database information

- Debugging information

They use that information to work out how to compromise a website.

If your website exposes its technology stack, it is probably an attractive target for attackers.

In this post, I'll cover some common ways that web applications leak valuable information.

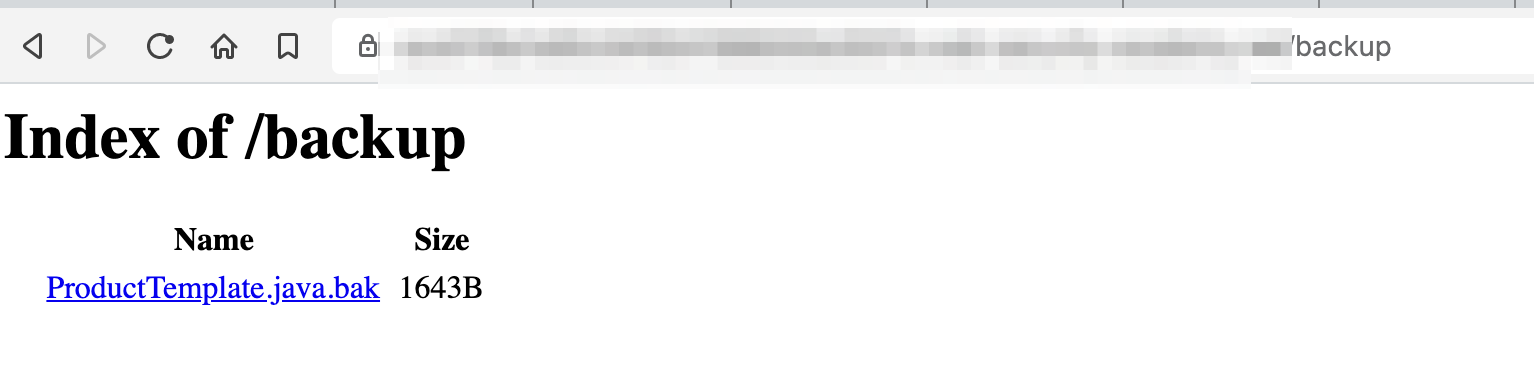

robots.txt file

This is one of the first things I check when I run a penetration test against a web application.

The idea behind the Robots Exclusion Protocol (or just robots.txt) is simple: ask search engine crawlers to stay away from the sections of your website that you don't want showing up in search results.

A robots.txt file is not a security vulnerability in itself, but developers sometimes list private resources in it that help attackers map sensitive areas of the site. Check the following example:

The bottom line is that robots.txt is read not only by search engines but also by humans.

Remember, robots.txt tells attackers exactly which sections of your website you don't want them to look at. :)
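To show how little effort this takes, here is a minimal Python sketch that pulls the Disallow entries out of a robots.txt file. The sample file and its paths are hypothetical; against a real target you would first download `/robots.txt` from the site.

```python
# Minimal sketch: list the paths a robots.txt file asks crawlers to avoid.
# The sample content below is hypothetical.


def disallowed_paths(robots_txt: str) -> list[str]:
    """Return every non-empty Disallow value, in file order."""
    paths = []
    for raw_line in robots_txt.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if ":" not in line:
            continue
        field, value = line.split(":", 1)
        value = value.strip()
        # An empty "Disallow:" means "allow everything", so skip it.
        if field.strip().lower() == "disallow" and value:
            paths.append(value)
    return paths


SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /backup/db_2019.sql  # old database dump
Disallow:
"""

if __name__ == "__main__":
    for path in disallowed_paths(SAMPLE_ROBOTS_TXT):
        print(path)
```

To try it against a live site, you could pass in `urllib.request.urlopen("https://example.com/robots.txt").read().decode()`, where `example.com` is only a placeholder.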

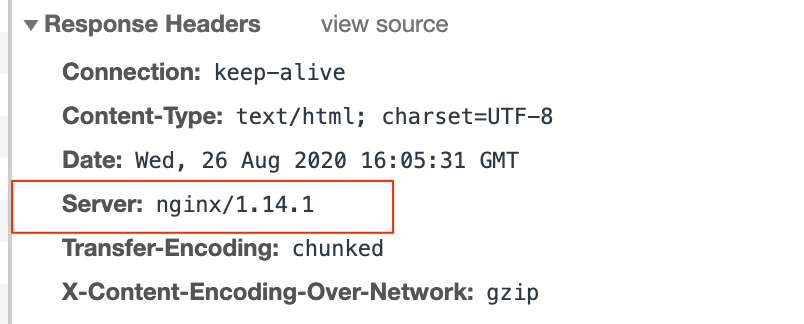

Server Headers

By default, most web servers return a Server header in their responses. This information is of no use to browsers or other clients; it mainly tells attackers which vulnerabilities they can probe for.

Make sure to disable any HTTP response headers in your web server configuration that reveal the server technology, language, or version.
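A quick way to check your own responses is to filter the headers for names that typically disclose the stack. The Python sketch below assumes a small, non-exhaustive list of such headers (`Server`, `X-Powered-By`, `X-AspNet-Version`, `X-AspNetMvc-Version`, `X-Generator`); the sample values are hypothetical.

```python
# Minimal sketch: flag response headers that commonly disclose the stack.
# The header list is an illustrative, non-exhaustive assumption.

DISCLOSING_HEADERS = {
    "server",
    "x-powered-by",
    "x-aspnet-version",
    "x-aspnetmvc-version",
    "x-generator",
}


def disclosing_headers(headers) -> dict[str, str]:
    """Return headers whose names suggest technology or version disclosure.

    `headers` can be any object with an items() method, such as a dict or
    the .headers attribute of a urllib/http.client response.
    """
    return {
        name: value
        for name, value in headers.items()
        if name.lower() in DISCLOSING_HEADERS
    }


if __name__ == "__main__":
    # Hypothetical headers, similar to what an Apache + PHP server might send.
    sample = {
        "Server": "Apache/2.4.29 (Ubuntu)",
        "X-Powered-By": "PHP/7.2.24",
        "Content-Type": "text/html; charset=UTF-8",
    }
    print(disclosing_headers(sample))
```

With a live response you could call `disclosing_headers(resp.headers)` on the object returned by `urllib.request.urlopen(...)`. An empty result after hardening the server configuration is a good sign, although a generic `Server` value (for example just `Apache`) may still be reported.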

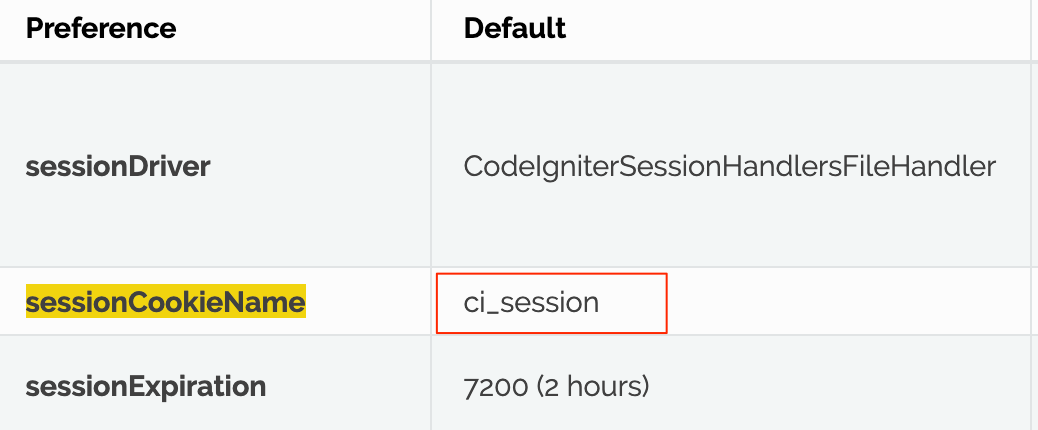

Default Cookie Parameters

Cookies are used to store information such as the session state. A cookie's default name frequently reveals the server-side technology: Java web servers, for example, use a session cookie named JSESSIONID, so attackers can identify the technology at a glance. It's advisable to change default session cookie names to something neutral.
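Here is a minimal Python sketch of the fingerprinting an attacker might do with `Set-Cookie` headers. The name-to-technology table is an illustrative assumption covering a few well-known defaults (Java's `JSESSIONID`, PHP's `PHPSESSID`, ASP.NET's `ASP.NET_SessionId`, express-session's `connect.sid`, Django's `sessionid`), not an exhaustive list.

```python
# Minimal sketch: map well-known default session cookie names to the
# technology they usually indicate. The table is illustrative, not exhaustive.
from http.cookies import SimpleCookie

DEFAULT_SESSION_COOKIES = {
    "JSESSIONID": "Java (servlet container)",
    "PHPSESSID": "PHP",
    "ASP.NET_SessionId": "ASP.NET",
    "connect.sid": "Node.js (express-session)",
    "sessionid": "Django",
}


def fingerprint_cookies(set_cookie_headers):
    """Return {cookie_name: likely_technology} for recognised default names."""
    hints = {}
    for header in set_cookie_headers:
        jar = SimpleCookie()
        # Parses headers such as "JSESSIONID=abc; Path=/; HttpOnly".
        jar.load(header)
        for name in jar:
            if name in DEFAULT_SESSION_COOKIES:
                hints[name] = DEFAULT_SESSION_COOKIES[name]
    return hints
```

On a live response, `resp.headers.get_all("Set-Cookie") or []` gives the list to pass in. Most platforms let you rename the session cookie (for example PHP's `session.name` setting or Django's `SESSION_COOKIE_NAME`), which takes these names out of the table.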

Client-side error reporting

Client-side error reporting is useful in a development environment because it shows detailed information about an error.

This mode must be disabled in a production environment.

Debugging output can reveal valuable details such as the technology in use, libraries, modules, directory paths, and database information.
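One common pattern is to keep full error details in server-side logs and send the client only a generic message. The sketch below assumes a plain Python WSGI application; `hide_errors` and its `debug` flag are hypothetical names standing in for the development/production switch that frameworks usually provide (Django's `DEBUG` setting, for example).

```python
# Minimal sketch: a WSGI middleware that logs full error details on the
# server but sends only a generic message to the client unless debug=True.
import logging
import sys
import traceback

logger = logging.getLogger("app.errors")


def hide_errors(app, debug=False):
    """Wrap a WSGI app so unhandled exceptions don't leak tracebacks.

    Only exceptions raised while calling the app are handled; errors raised
    later, while the server iterates a streamed response body, are not.
    """
    def wrapper(environ, start_response):
        try:
            return app(environ, start_response)
        except Exception:
            # The full traceback goes to the server-side log only.
            logger.exception("Unhandled error for %s", environ.get("PATH_INFO", "?"))
            if debug:
                # Development mode: show the traceback in the response.
                body = traceback.format_exc().encode("utf-8")
            else:
                # Production mode: reveal nothing about the stack.
                body = b"Internal Server Error"
            start_response(
                "500 Internal Server Error",
                [("Content-Type", "text/plain; charset=utf-8"),
                 ("Content-Length", str(len(body)))],
                sys.exc_info(),  # lets the server recover if headers were started
            )
            return [body]
    return wrapper
```

Keeping `debug=False` as the default means a forgotten setting fails closed rather than exposing tracebacks in production.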

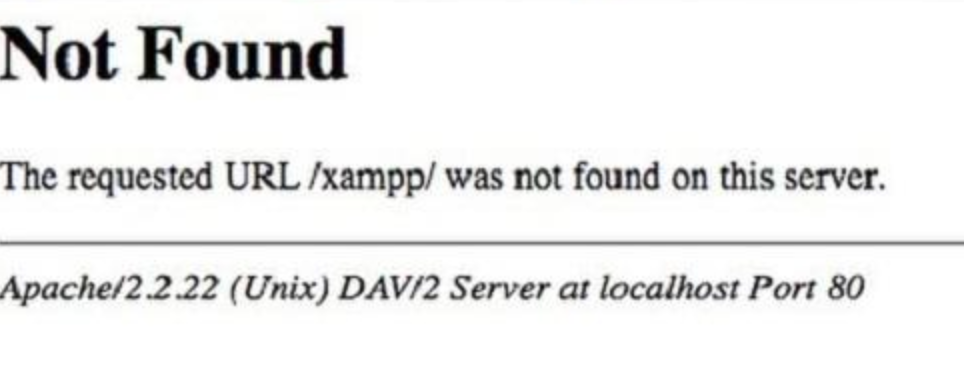

Even ordinary error pages can contain sensitive information. The following example shows a 404 error page that reveals the HTTP server version and the operating system.

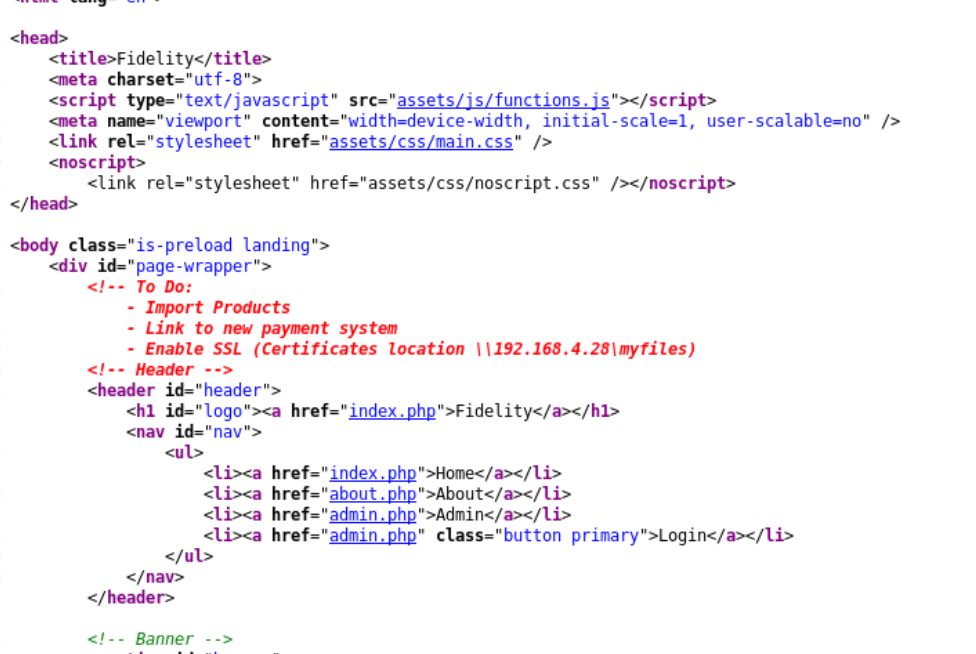

Source Code

It's important to check the source code of HTML and JavaScript files. Developers frequently add comments containing interesting information and forget to remove them before going to production.

Before deploying, don't forget to sanitize your client-side files. Developers usually minify JavaScript because smaller .js files load faster in web browsers, and minification has the welcome side effect of stripping most comments.
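As a pre-deployment check, you can list the comments left in your HTML. This Python sketch uses the standard library's `html.parser` and only collects `<!-- ... -->` comments; JavaScript `//` and `/* */` comments, including those inside `<script>` blocks, are out of its scope. The sample page is hypothetical.

```python
# Minimal sketch: list the HTML comments left in a page before it ships.
# Only <!-- ... --> comments are collected; JavaScript // and /* */ comments
# (including those inside <script> blocks) are not.
from html.parser import HTMLParser


class CommentCollector(HTMLParser):
    """Collects the text of every HTML comment it encounters."""

    def __init__(self):
        super().__init__()
        self.comments = []

    def handle_comment(self, data):
        self.comments.append(data.strip())


def html_comments(markup: str) -> list[str]:
    parser = CommentCollector()
    parser.feed(markup)
    parser.close()
    return parser.comments


if __name__ == "__main__":
    # Hypothetical page with the kind of comment that should never ship.
    page = """<html><body>
<!-- TODO: remove test account admin/admin before release -->
<p>Welcome</p>
</body></html>"""
    for comment in html_comments(page):
        print(comment)
```

Running a check like this in a build step turns "don't forget to sanitize" into something that fails loudly when a comment slips through.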

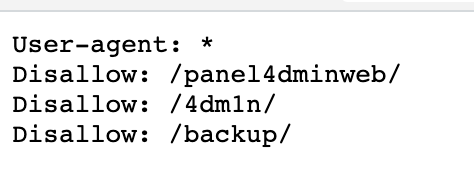

Directory Listing

When directory listing is enabled on a web server and a directory has no index file (such as index.html), the server displays a list of the files in that directory.

Some developers assume that if nothing links to the files in a directory, nobody can access them. This is wrong. Scanning tools such as gobuster, dirbuster, and dirb, combined with wordlists, can easily discover those directories and, if directory listing is enabled, every file inside them.
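The core idea behind those tools fits in a few lines of Python. This sketch requests candidate directory names from a wordlist and flags responses that look like an auto-generated listing. The title markers are a heuristic based on the default listing pages of Apache, nginx, and Python's `http.server`; `probe` and `looks_like_listing` are hypothetical helper names, and real tools add concurrency, extensions, and smarter 404 detection.

```python
# Minimal sketch of what tools like gobuster or dirb automate: request
# candidate directory names from a wordlist and check whether the response
# looks like an auto-generated directory listing.
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import urlopen

# Title strings used by common auto-index pages (Apache, nginx, Python's
# http.server). This is a heuristic, not an exhaustive list.
LISTING_MARKERS = ("<title>index of /", "<title>directory listing for /")


def looks_like_listing(body: str) -> bool:
    lowered = body.lower()
    return any(marker in lowered for marker in LISTING_MARKERS)


def probe(base_url, words, timeout=5.0):
    """Return (url, status, is_listing) for each candidate that is not a 404."""
    results = []
    base = base_url.rstrip("/") + "/"
    for word in words:
        url = urljoin(base, word.strip("/") + "/")
        try:
            with urlopen(url, timeout=timeout) as resp:
                body = resp.read(65536).decode("utf-8", errors="replace")
                results.append((url, resp.status, looks_like_listing(body)))
        except HTTPError as err:
            # 401/403 still confirm that the directory exists.
            if err.code != 404:
                results.append((url, err.code, False))
        except OSError:
            # Connection errors and timeouts: skip this candidate.
            continue
    return results
```

For example, `probe("https://example.com", ["uploads", "backup", "admin"])` (placeholder URL and words) would report which of those directories exist and which of them expose a file listing.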